Implement Robotics Vision and Perception in LabVIEW

Introduction

Vision and perception let robots interpret and interact with their environment. LabVIEW (Laboratory Virtual Instrument Engineering Workbench) from National Instruments (NI) is a graphical programming environment widely used in engineering and scientific applications. Adding vision and perception capabilities in LabVIEW supports the development of robotic systems for applications ranging from industrial automation to autonomous vehicles.

This guide covers the concepts, tools, and methods for implementing robotics vision and perception in LabVIEW. It is organized into the following topics:

- Fundamentals of Robotics Vision and Perception

- Overview of LabVIEW for Robotics

- Hardware and Software Requirements

- Image Acquisition and Processing in LabVIEW

- Object Detection and Recognition

- 3D Vision and Depth Perception

- Real-Time Vision Processing

- Case Studies and Applications

- Challenges and Future Directions

1. Fundamentals of Robotics Vision and Perception

1.1. Basics of Machine Vision

Machine vision involves the use of cameras and image processing algorithms to interpret visual information. In robotics, machine vision systems are used for tasks such as navigation, inspection, manipulation, and interaction with objects. Key components of machine vision include:

- Image Acquisition: Capturing images using cameras or sensors.

- Image Processing: Applying algorithms to enhance, filter, and extract information from images.

- Object Detection: Identifying and locating objects within an image.

- Object Recognition: Classifying objects based on their features.

- 3D Vision: Understanding the depth and spatial relationships in a scene.

1.2. Perception in Robotics

Perception in robotics extends beyond vision, encompassing various sensors and modalities to understand the environment. This includes:

- Visual Perception: Using cameras to interpret visual data.

- Lidar and Radar: Using light or radio waves to measure distances and create 3D maps.

- Tactile Sensors: Detecting touch and pressure.

- Auditory Sensors: Processing sound for localization and recognition.

Combining data from multiple sensors, known as sensor fusion, enhances the robot’s perception capabilities, enabling more accurate and reliable interpretations of the environment.
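
One simple form of sensor fusion is inverse-variance weighting of independent measurements of the same quantity. The sketch below uses Python rather than LabVIEW, since LabVIEW code is graphical; the function name `fuse_estimates` and the sensor readings are illustrative assumptions, not part of any NI library.

```python
def fuse_estimates(measurements):
    """Fuse independent (value, variance) measurements by inverse-variance weighting.

    Returns the fused value and the variance of the fused estimate.
    """
    if not measurements:
        raise ValueError("need at least one measurement")
    weights = [1.0 / var for _, var in measurements]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, measurements)) / total
    return fused, 1.0 / total


# Hypothetical readings of the same obstacle distance, in metres.
camera = (2.10, 0.04)   # stereo depth estimate: noisier
lidar = (2.00, 0.01)    # lidar range: more precise
distance, variance = fuse_estimates([camera, lidar])
```

The fused estimate lies closer to the more precise sensor, and its variance is lower than that of either input, which is the sense in which fusion makes the interpretation more reliable.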

2. Overview of LabVIEW for Robotics

2.1. Introduction to LabVIEW

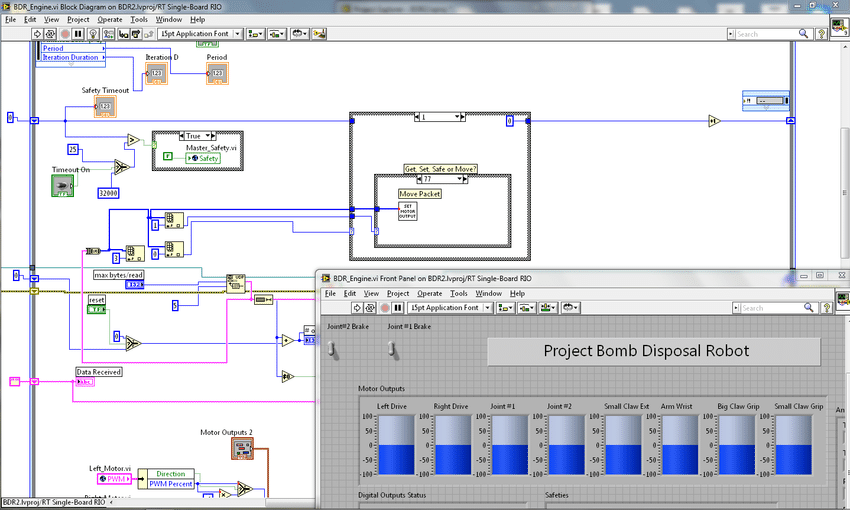

LabVIEW is a graphical programming language built on a dataflow model: a node on the block diagram executes as soon as all of its inputs are available. It is well suited to applications that interface with hardware, acquire data, or process it in real time. LabVIEW programs, known as Virtual Instruments (VIs), consist of a block diagram (code) and a front panel (user interface).

2.2. LabVIEW Robotics Module

The LabVIEW Robotics Module extends LabVIEW’s capabilities to design and deploy robotic systems. It provides tools and libraries for:

- Simulation and Modeling: Creating virtual prototypes and testing algorithms.

- Sensor Integration: Interfacing with various sensors and actuators.

- Control Algorithms: Implementing control logic for robot movement and behavior.

- Path Planning: Designing algorithms for navigating through environments.

2.3. Vision Development Module (VDM)

The Vision Development Module (VDM) in LabVIEW provides comprehensive tools for developing vision applications. It includes functions for image acquisition, processing, analysis, and display. Key features of VDM include:

- Image Acquisition: Support for various camera interfaces such as USB, GigE, and FireWire.

- Image Processing: Functions for filtering, morphology, edge detection, and transformations.

- Pattern Matching: Algorithms for recognizing patterns and shapes.

- 3D Vision: Tools for stereo vision and depth measurement.

3. Hardware and Software Requirements

3.1. Hardware Components

To implement robotics vision and perception in LabVIEW, you will need the following hardware components:

- Cameras: Various types of cameras can be used, including USB cameras, GigE cameras, and stereo vision cameras. The choice depends on the application requirements such as resolution, frame rate, and field of view.

- Frame Grabbers: Hardware devices that capture video frames from cameras, either digitizing analog video or receiving digital video over interfaces such as Camera Link. National Instruments offers a range of frame grabbers compatible with LabVIEW.

- Robotic Platforms: This includes robotic arms, mobile robots, or custom-built robots equipped with necessary sensors and actuators.

- Sensors: Additional sensors such as lidar, ultrasonic sensors, and IMUs (Inertial Measurement Units) may be used to complement vision data.

3.2. Software Components

The primary software components required are:

- LabVIEW: The core development environment.

- Vision Development Module (VDM): For image acquisition and processing.

- Robotics Module: For designing and controlling robotic systems.

- Driver Software: Necessary drivers for cameras and other hardware components.

4. Image Acquisition and Processing in LabVIEW

4.1. Image Acquisition

Image acquisition involves capturing images from cameras and making them available for processing. In LabVIEW, this is typically done with Vision Acquisition Software (VAS), which provides the NI-IMAQ driver for NI frame grabbers and the NI-IMAQdx driver for cameras on standard buses such as GigE Vision and USB3 Vision. Steps involved in image acquisition include:

- Camera Configuration: Setting parameters such as exposure, gain, and frame rate.

- Image Capture: Acquiring images either continuously or on-demand.

- Image Storage: Saving captured images to disk or memory for further processing.
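
The configure, capture, and store steps above can be sketched in text form as follows. This is a Python illustration with a simulated camera, not LabVIEW or NI-IMAQdx code: `CameraConfig`, `SimulatedCamera`, and `acquire` are hypothetical names, and the brightness model is invented for the example.

```python
from collections import deque
from dataclasses import dataclass
import random


@dataclass
class CameraConfig:
    """Hypothetical acquisition settings; real attribute names depend on the camera."""
    width: int = 8
    height: int = 6
    exposure_ms: float = 10.0
    gain: float = 1.0


class SimulatedCamera:
    """Stand-in for a camera driver; it returns synthetic 8-bit grayscale frames."""

    def __init__(self, config, seed=0):
        self.config = config
        self._rng = random.Random(seed)

    def grab(self):
        # Invented model: brightness scales with exposure and gain, plus noise,
        # clipped to the 8-bit range.
        level = self.config.exposure_ms * self.config.gain * 10.0
        return [
            [min(255, max(0, int(level + self._rng.gauss(0, 5))))
             for _ in range(self.config.width)]
            for _ in range(self.config.height)
        ]


def acquire(camera, n_frames, buffer_size=4):
    """Grab n_frames continuously, keeping only the newest buffer_size frames."""
    ring = deque(maxlen=buffer_size)
    for _ in range(n_frames):
        ring.append(camera.grab())
    return ring


frames = acquire(SimulatedCamera(CameraConfig()), n_frames=10)
```

Keeping frames in a fixed-size ring buffer bounds memory use during continuous acquisition; frames that must be kept longer would be written to disk instead.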

4.2. Image Processing

Image processing involves manipulating and analyzing images to extract useful information. LabVIEW VDM provides a rich set of functions for image processing, including:

- Filtering: Techniques such as Gaussian blur, median filtering, and sharpening to enhance image quality.

- Morphology: Operations like erosion, dilation, opening, and closing for shape analysis.

- Edge Detection: Algorithms like Sobel, Canny, and Laplacian to detect edges and boundaries.

- Color Analysis: Functions to analyze and manipulate color spaces, such as RGB to HSV conversion.
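
To make two of these operations concrete, here is a minimal pure-Python sketch of a 3x3 median filter followed by a Sobel gradient magnitude. It illustrates the arithmetic only and does not reproduce the VDM functions; the function names and the border handling (borders copied or zeroed) are assumptions chosen for brevity.

```python
from statistics import median


def median_filter3(img):
    """3x3 median filter; border pixels are copied unchanged."""
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            window = [img[y + dy][x + dx] for dy in (-1, 0, 1) for dx in (-1, 0, 1)]
            out[y][x] = median(window)
    return out


def sobel_magnitude(img):
    """Gradient magnitude from the 3x3 Sobel kernels; border pixels are set to 0."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            # Horizontal gradient: right column minus left column.
            gx = (img[y - 1][x + 1] + 2 * img[y][x + 1] + img[y + 1][x + 1]
                  - img[y - 1][x - 1] - 2 * img[y][x - 1] - img[y + 1][x - 1])
            # Vertical gradient: bottom row minus top row.
            gy = (img[y + 1][x - 1] + 2 * img[y + 1][x] + img[y + 1][x + 1]
                  - img[y - 1][x - 1] - 2 * img[y - 1][x] - img[y - 1][x + 1])
            out[y][x] = (gx * gx + gy * gy) ** 0.5
    return out


# 5x6 test image: dark left half, bright right half, one salt-noise pixel.
image = [[0, 0, 0, 100, 100, 100] for _ in range(5)]
image[2][1] = 255
smoothed = median_filter3(image)
edges = sobel_magnitude(smoothed)
```

Filtering before edge detection matters here: the median filter removes the isolated bright pixel, so the Sobel response is concentrated along the real dark-to-bright boundary instead of around the noise.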

4.3. Feature Extraction

Feature extraction is the process of identifying important characteristics or features within an image. Common features include edges, corners, blobs, and textures. LabVIEW provides functions for:

- Edge Detection: Identifying edges using gradient-based methods.

- Corner Detection: Finding corners and key points using algorithms like Harris or Shi-Tomasi.

- Blob Analysis: Detecting and analyzing connected regions in a binary image.

- Texture Analysis: Evaluating the texture properties of an image region.
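
Blob analysis, for example, amounts to labeling connected regions and measuring each one. The following Python sketch shows the idea with a breadth-first flood fill and 4-connectivity; `find_blobs` and the measurements it reports are illustrative choices, not the VDM particle analysis API.

```python
from collections import deque


def find_blobs(binary):
    """Label 4-connected regions of nonzero pixels and report basic measurements."""
    h, w = len(binary), len(binary[0])
    seen = [[False] * w for _ in range(h)]
    blobs = []
    for y0 in range(h):
        for x0 in range(w):
            if not binary[y0][x0] or seen[y0][x0]:
                continue
            # Breadth-first flood fill from this unvisited foreground pixel.
            queue = deque([(y0, x0)])
            seen[y0][x0] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < h and 0 <= nx < w
                            and binary[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            ys = [p[0] for p in pixels]
            xs = [p[1] for p in pixels]
            blobs.append({
                "area": len(pixels),
                "centroid": (sum(xs) / len(xs), sum(ys) / len(ys)),  # (x, y)
                "bbox": (min(xs), min(ys), max(xs), max(ys)),  # left, top, right, bottom
            })
    return blobs


binary = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 0, 1],
    [0, 0, 0, 0, 1],
    [0, 0, 0, 0, 1],
]
blobs = find_blobs(binary)
```

Area, centroid, and bounding box are often enough to reject noise blobs by size and to hand a pick position to a robot controller.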

5. Object Detection and Recognition

5.1. Object Detection

Object detection involves identifying and locating objects within an image. Techniques used for object detection include:

- Thresholding: Converting an image to binary based on intensity values to isolate objects.

- Contour Detection: Finding and analyzing contours to detect object boundaries.

- Template Matching: Using predefined templates to locate objects within an image.
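
Thresholding needs a threshold value, and one common way to choose it automatically is Otsu's method, which picks the value that best separates the histogram into two classes. The Python sketch below is an illustration under the assumption of 8-bit grayscale input; it is not taken from VDM, and the pixel values are made up.

```python
def otsu_threshold(pixels):
    """Return the 8-bit threshold that maximizes between-class variance (Otsu's method)."""
    hist = [0] * 256
    for p in pixels:
        hist[p] += 1
    total = len(pixels)
    sum_all = sum(i * n for i, n in enumerate(hist))
    best_t, best_var = 0, -1.0
    w_bg = 0      # number of pixels with value <= t
    sum_bg = 0    # sum of those pixel values
    for t in range(256):
        w_bg += hist[t]
        sum_bg += t * hist[t]
        w_fg = total - w_bg
        if w_bg == 0 or w_fg == 0:
            continue  # one class is empty, so there is no split to score
        mean_bg = sum_bg / w_bg
        mean_fg = (sum_all - sum_bg) / w_fg
        between = w_bg * w_fg * (mean_bg - mean_fg) ** 2
        if between > best_var:
            best_t, best_var = t, between
    return best_t


# Hypothetical pixel values: a dark background and a bright object.
pixels = [10, 12, 11, 200, 205, 198, 9, 202]
t = otsu_threshold(pixels)
mask = [1 if p > t else 0 for p in pixels]
```

Pixels above the returned threshold are treated as object pixels; the resulting binary mask is the usual input to contour detection or blob analysis.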

5.2. Object Recognition

Object recognition involves classifying objects based on their features. Methods for object recognition include:

- Pattern Matching: Matching patterns or shapes using algorithms like normalized cross-correlation.

- Feature Matching: Matching features such as keypoints and descriptors using algorithms like SIFT (Scale-Invariant Feature Transform) or SURF (Speeded-Up Robust Features).

- Machine Learning: Using machine learning models trained on labeled data to recognize objects. This can include techniques like neural networks and support vector machines.
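
Normalized cross-correlation, mentioned above for pattern matching, can be sketched as an exhaustive search over image positions. This Python version is a minimal illustration, assuming small grayscale images stored as nested lists; `ncc` and `match_template` are hypothetical names, and production implementations use much faster search strategies.

```python
from math import sqrt


def ncc(patch, template):
    """Zero-mean normalized cross-correlation of two equal-length lists, in [-1, 1]."""
    n = len(template)
    mp = sum(patch) / n
    mt = sum(template) / n
    num = sum((p - mp) * (t - mt) for p, t in zip(patch, template))
    den = sqrt(sum((p - mp) ** 2 for p in patch)
               * sum((t - mt) ** 2 for t in template))
    return num / den if den else 0.0  # flat patches carry no pattern


def match_template(image, template):
    """Slide the template over the image; return (best_score, (x, y)) of the top-left corner."""
    th, tw = len(template), len(template[0])
    flat_t = [v for row in template for v in row]
    best = (-2.0, (0, 0))
    for y in range(len(image) - th + 1):
        for x in range(len(image[0]) - tw + 1):
            patch = [image[y + j][x + i] for j in range(th) for i in range(tw)]
            score = ncc(patch, flat_t)
            if score > best[0]:
                best = (score, (x, y))
    return best


template = [[10, 200],
            [200, 10]]
# The same pattern appears at x=2, y=1, but 50 grey levels brighter.
image = [
    [50, 50, 50, 50, 50],
    [50, 50, 60, 250, 50],
    [50, 50, 250, 60, 50],
    [50, 50, 50, 50, 50],
]
score, position = match_template(image, template)
```

Because each patch and the template are mean-subtracted and normalized, the match score is unaffected by a uniform brightness offset, which is why the brighter copy of the pattern is still found.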

5.3. Implementing Object Detection and Recognition in LabVIEW

In LabVIEW, object detection and recognition can be implemented using VDM functions. Steps include:

- Preprocessing: Apply image processing techniques to enhance and prepare the image.

- Feature Extraction: Extract relevant features from the image.

- Matching: Use pattern or feature matching algorithms to detect and recognize objects.

- Verification: Validate the results to reduce false positives and improve accuracy.

6. 3D Vision and Depth Perception

6.1. Stereo Vision

Stereo vision involves using two cameras to capture images from slightly different viewpoints. By analyzing the disparity between these images, it is possible to estimate depth and create a 3D map of the scene. LabVIEW supports stereo vision through the VDM, providing functions for:

- Calibration: Estimating each camera's lens distortion and the relative position and orientation of the two cameras.

- Rectification: Aligning the stereo images to simplify disparity calculation.

- Disparity Map: Computing the disparity between the stereo images to estimate depth.

- 3D Reconstruction: Creating a 3D model or point cloud from the disparity map.
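
For a rectified stereo pair, depth follows from disparity through the relation Z = f * B / d, where f is the focal length in pixels, B the baseline between the cameras, and d the disparity in pixels. The Python sketch below applies that relation and back-projects one pixel to a 3D point; the rig parameters are hypothetical and the function names are illustrative, not VDM calls.

```python
def disparity_to_depth(disparity_px, focal_px, baseline_m):
    """Depth in metres for a rectified stereo pair; None where disparity is not positive."""
    if disparity_px <= 0:
        return None  # no valid match, or a point at infinity
    return focal_px * baseline_m / disparity_px


def reproject(u, v, disparity_px, focal_px, baseline_m, cx, cy):
    """Back-project pixel (u, v) to a 3D point (X, Y, Z) in the left camera frame."""
    z = disparity_to_depth(disparity_px, focal_px, baseline_m)
    if z is None:
        return None
    return ((u - cx) * z / focal_px, (v - cy) * z / focal_px, z)


# Hypothetical rig: 700 px focal length, 0.12 m baseline, principal point (320, 240).
point = reproject(u=390, v=240, disparity_px=42.0,
                  focal_px=700.0, baseline_m=0.12, cx=320.0, cy=240.0)
```

Since depth is inversely proportional to disparity, a one-pixel disparity error costs far more accuracy for distant points than for near ones, which is one reason baseline and resolution are chosen to suit the working distance.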

6.2. Depth Sensors

Depth sensors, such as lidar or structured light cameras, provide direct measurements of distance to objects in the environment. These sensors can be integrated with LabVIEW to enhance robotic perception. LabVIEW provides tools for:

- Sensor Integration: Interfacing with depth sensors and acquiring data.

- Point Cloud Processing: Analyzing and processing 3D point clouds.

- Obstacle Detection: Identifying and avoiding obstacles using depth data.
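
As an illustration of obstacle detection from depth data, the Python sketch below converts a planar lidar scan to Cartesian points and finds the nearest return inside a corridor ahead of the robot. The scan layout, corridor width, and stop distance are hypothetical values, and the functions are not part of the LabVIEW Robotics Module.

```python
from math import cos, sin, radians


def scan_to_points(ranges_m, start_deg, step_deg):
    """Convert a planar lidar scan to (x, y) points; x is forward, y is left."""
    points = []
    for i, r in enumerate(ranges_m):
        if r is None or r <= 0:
            continue  # skip dropped returns
        a = radians(start_deg + i * step_deg)
        points.append((r * cos(a), r * sin(a)))
    return points


def nearest_obstacle(points, corridor_half_width_m, max_range_m):
    """Return the closest forward distance inside the robot's corridor, or None."""
    ahead = [x for x, y in points
             if 0 < x <= max_range_m and abs(y) <= corridor_half_width_m]
    return min(ahead) if ahead else None


# Hypothetical 5-beam scan from -30 to +30 degrees in 15 degree steps.
ranges = [3.0, 2.5, 1.2, None, 0.9]
points = scan_to_points(ranges, start_deg=-30, step_deg=15)
distance = nearest_obstacle(points, corridor_half_width_m=0.3, max_range_m=2.0)
must_stop = distance is not None and distance < 1.5
```

Filtering by corridor width rather than by raw range avoids stopping for objects that are close but off to the side of the robot's path.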

7. Real-Time Vision Processing

7.1. Requirements for Real-Time Processing

Real-time vision processing is essential for applications that must respond within a bounded time, such as autonomous vehicles and robotic manipulation. Key requirements include:

- Low Latency: Minimizing the delay between image capture and processing.

- High Throughput: Processing a high volume of images or frames per second.

- Deterministic Performance: Ensuring consistent and predictable processing times.
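
These three requirements can be checked against measured per-frame latencies. The sketch below, in Python for illustration, summarizes a set of hypothetical measurements against the frame period of a 30 fps camera; `timing_report` and its field names are assumptions made for this example.

```python
from statistics import mean


def timing_report(latencies_ms, frame_period_ms):
    """Summarize per-frame processing latencies against a frame-period budget."""
    worst = max(latencies_ms)
    avg = mean(latencies_ms)
    return {
        "mean_ms": avg,
        "worst_ms": worst,
        "jitter_ms": worst - min(latencies_ms),  # spread between fastest and slowest frame
        "max_fps": 1000.0 / avg,                 # throughput if frames are processed one at a time
        "missed": sum(1 for t in latencies_ms if t > frame_period_ms),
    }


# Hypothetical measurements for a 30 fps camera (about 33.3 ms per frame).
report = timing_report([12.0, 14.0, 13.0, 41.0, 12.0, 13.0], frame_period_ms=1000 / 30)
```

In this example the mean latency is well inside the budget, yet one frame misses its deadline. That is why deterministic performance is judged on worst-case latency and jitter rather than on averages.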

7.2. Techniques for Real-Time Processing in LabVIEW

LabVIEW supports real-time processing through features such as:

- Parallel Execution: Utilizing multiple cores and processors to execute tasks in parallel.

- Real-Time Operating Systems (RTOS): Deploying vision algorithms on real-time hardware platforms such as CompactRIO.

- FPGA Acceleration: Offloading computationally intensive tasks to FPGAs (Field-Programmable Gate Arrays) for faster processing.
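
A common structure for parallel execution is a producer/consumer pipeline, in which acquisition and processing run in separate loops connected by a bounded queue. The Python sketch below shows that structure with threads; it is an analogy to the LabVIEW pattern rather than LabVIEW code, the names are illustrative, and Python threads demonstrate the decoupling, not a multicore speedup.

```python
import queue
import threading


def run_pipeline(frames, process, buffer_size=2):
    """Acquire and process frames in separate threads joined by a bounded queue."""
    q = queue.Queue(maxsize=buffer_size)
    results = []

    def producer():
        for frame in frames:
            q.put(frame)      # blocks when the consumer falls behind
        q.put(None)           # sentinel: no more frames

    def consumer():
        while True:
            frame = q.get()
            if frame is None:
                break
            results.append(process(frame))

    threads = [threading.Thread(target=producer), threading.Thread(target=consumer)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


# Stand-in "frames" (lists of pixel values) and a trivial processing step.
frames = [[i, i + 1, i + 2] for i in range(5)]
means = run_pipeline(frames, process=lambda f: sum(f) / len(f))
```

The bounded queue is the key design choice: it lets acquisition run ahead by a few frames to absorb short processing delays, while preventing unbounded memory growth if processing is persistently too slow.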

7.3. Optimization Strategies

Optimizing vision algorithms for real-time performance involves:

- Efficient Algorithms: Using algorithms with lower computational complexity.

- Memory Management: Reducing memory usage and avoiding unnecessary data copying.

- Code Profiling: Identifying and optimizing bottlenecks in the code.
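
Profiling starts with measuring where the time goes. The following Python sketch accumulates wall-clock time per pipeline stage so the slowest stage can be targeted first; `StageProfiler` and the two stage functions are hypothetical. In LabVIEW the equivalent measurements would come from its own profiling tools or from timing code around each stage.

```python
import time
from collections import defaultdict


class StageProfiler:
    """Accumulate wall-clock time and call counts per named pipeline stage."""

    def __init__(self):
        self.totals = defaultdict(float)
        self.calls = defaultdict(int)

    def run(self, name, func, *args):
        start = time.perf_counter()
        result = func(*args)
        self.totals[name] += time.perf_counter() - start
        self.calls[name] += 1
        return result

    def slowest(self):
        """Name of the stage with the largest accumulated time."""
        return max(self.totals, key=self.totals.get)


def threshold(img, t):
    return [[1 if p > t else 0 for p in row] for row in img]


def count_foreground(mask):
    return sum(map(sum, mask))


profiler = StageProfiler()
image = [[(x * y) % 256 for x in range(64)] for y in range(64)]
for _ in range(3):
    mask = profiler.run("threshold", threshold, image, 128)
    count = profiler.run("count", count_foreground, mask)
```

Timings vary from run to run, so several iterations are accumulated before comparing stages with `profiler.slowest()`.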

8. Case Studies and Applications

8.1. Industrial Automation

In industrial automation, machine vision systems are used for quality control, inspection, and robot guidance. Typical applications include:

- Assembly Line Inspection: Using vision systems to inspect products for defects and ensure quality standards.

- Pick-and-Place Robots: Guiding robotic arms to accurately pick and place objects based on visual feedback.

8.2. Autonomous Vehicles

Autonomous vehicles rely heavily on vision and perception for navigation and obstacle avoidance. Typical applications include:

- Self-Driving Cars: Implementing vision algorithms to detect lanes, traffic signs, pedestrians, and other vehicles.

- Drones: Using stereo vision and depth sensors for obstacle detection and terrain mapping.

8.3. Medical Robotics

In medical robotics, vision systems enhance the precision and safety of surgical procedures. Typical applications include:

- Surgical Robots: Using 3D vision to guide surgical instruments and perform minimally invasive surgeries.

- Medical Imaging: Processing medical images for diagnostics and treatment planning.

9. Challenges and Future Directions

9.1. Challenges

Implementing robotics vision and perception in LabVIEW poses several challenges:

- Complexity: Developing and integrating sophisticated vision algorithms can be complex and time-consuming.

- Performance: Achieving real-time performance requires careful optimization and hardware acceleration.

- Robustness: Ensuring reliable performance in diverse and dynamic environments is challenging.

9.2. Future Directions

Future advancements in robotics vision and perception in LabVIEW may include:

- AI and Machine Learning: Integrating advanced AI algorithms to enhance object detection, recognition, and decision-making.

- Edge Computing: Deploying vision algorithms on edge devices for faster and more efficient processing.

- Collaborative Robots: Developing vision systems for collaborative robots that can safely and effectively work alongside humans.

Conclusion

Implementing robotics vision and perception in LabVIEW brings image acquisition, image processing, 3D vision, and real-time deployment together in a single development environment. With the tools and techniques discussed in this guide, engineers and researchers can build vision systems for applications ranging from industrial inspection to autonomous navigation. Advances in AI, machine learning, and edge computing are likely to extend what these systems can do.